Everyone has a theory about which jobs AI will take first. The consensus — repeated confidently at conferences by people who have just discovered a new statistic — goes like this: AI will hit the bottom of the job market first. The repetitive work. The routine. The people whose entire job could be replaced by a sufficiently motivated spreadsheet.

Tidy story. Largely wrong.

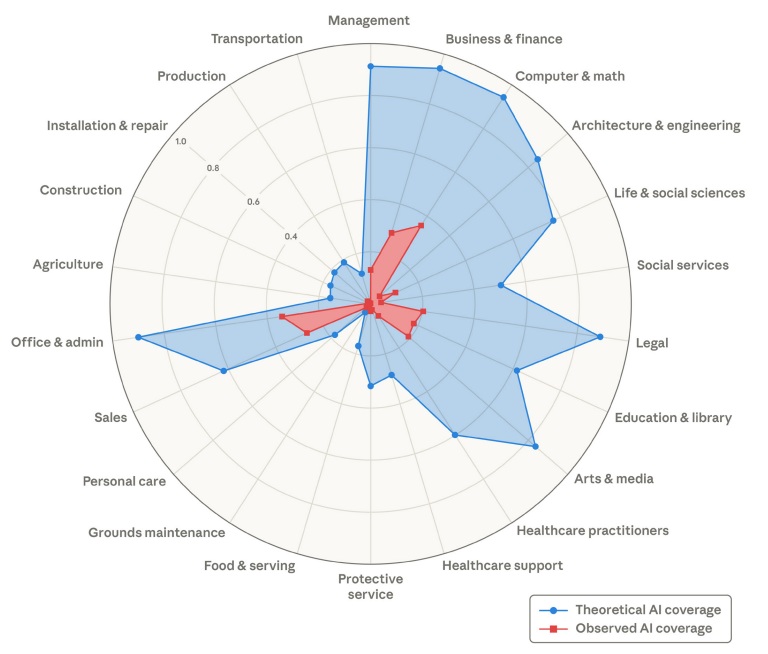

How to Read This Chart

The circle is divided into job categories — Management, Legal, Finance, Computer and Math, and so on around the edge. Think of each category as a spoke on a wheel. The further a shape reaches toward the outer edge of a spoke, the more of that job’s tasks are affected.

The blue shape is what AI could do today if fully deployed across every category. Notice how far it reaches outward — especially toward Management, Business and Finance, Legal, and Office and Admin. These are not fringe functions. They are the ones that run most organisations.

The red shape is what AI is actually doing in workplaces right now. It sits tight at the centre. Small. Compressed.

That gap between blue and red is the entire point of this essay.

Most people look at the red shape and feel reassured. The researchers who produced this data — economists working inside Anthropic, with access to millions of real professional AI interactions — read it differently. The red shape is not evidence that the risk is small. It is evidence that the clock has not yet run down.

The bottom half of the chart — Food and Serving, Construction, Personal Care, Grounds Maintenance — barely registers in either colour. These are the jobs that dominate the public conversation about automation. The chart suggests that conversation is looking in the wrong direction.

The Source: People Who Actually Know

This is not commentary from outside observers with a newsletter and a point of view. The research comes from inside Anthropic — the company that builds Claude, one of the most widely used AI systems in the world. The authors, Maxim Massenkoff and Peter McCrory, are economists working inside the machine itself. They have access to data no independent researcher has: millions of real professional interactions with AI, across hundreds of job types, tracked over time.

When researchers who build and study AI at this scale publish findings about where it is actually being used — and where it is heading — it is worth more than a glance. This is not speculation from the outside. It is a report from the engine room.

Their paper, published in March 2026, looks not just at what AI could theoretically do but at what it is actually doing in workplaces today. The findings are, to put it gently, inconvenient for the standard story.

The Wrong People Are Worried

The workers most at risk from AI earn, on average, 47% more than those with little or no risk. They are nearly four times more likely to hold a graduate degree. They work in Management, Business and Finance, Legal, and Technology.

In other words: the people filling the conference rooms where everyone is worrying about other people’s jobs.

The bartender is fine. The strategist should perhaps read on.

At the other end of the scale, barely registering: cooks, motorcycle mechanics, lifeguards, bartenders. Physical work. Hands-on work. Work that requires a human body in a specific place. These are the jobs that dominate public anxiety about automation. The data says they are among the safest.

Which raises a fair question about who has been setting the agenda for this conversation — and whose discomfort has been conveniently left out of it.

Two Conversations. Only One Is Happening

There are two conversations about AI and jobs running at the same time. One is loud: it is about workers further down the income scale, it features in policy speeches, and it generates a great deal of concerned commentary from people who are not, themselves, at risk. The other conversation is quiet — nearly silent — and it is about the analytical, advisory, and decision-making work that fills the top floors of most organisations.

The loud conversation is about the less affected group. The quiet one is about the more affected one.

Legal is a useful example, and not only because lawyers bill by the hour and presumably find their time too valuable for existential reflection. The research shows that legal work (linguistically complex, document-heavy, built on precedent) sits among the most AI-ready job categories in the economy. Yet the legal profession remains one of the most reluctant to ask seriously what this means. Liability, they say. Professional standards. Accuracy. All fair points. None of them permanent.

Resistance is not the same as protection. Ask anyone who has tried to stop a software update.

The Gap Is Not a Comfort

In technology and computer roles, AI could assist with 94% of tasks if fully deployed today. The share actually being handled by AI right now: 33%. Most people read that gap and feel relieved. The researchers read it differently.

That gap is closing. The things slowing it down (organisational caution, legal constraints, habits) are not walls. They are speed bumps. The 61 percentage points not yet touched are not permanently safe. They are just next.
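For readers who like to check the arithmetic, here is a minimal sketch in Python using only the two figures quoted above for technology and computer roles. The function names and the "share realised" ratio are illustrative assumptions, not measures taken from the paper.

```python
# Figures quoted above for technology and computer roles (percent of tasks).
could_do = 94    # share AI could assist with if fully deployed
doing_now = 33   # share AI is actually handling today


def untouched_gap(potential: int, actual: int) -> int:
    """Percentage points of tasks AI could cover but does not yet."""
    return potential - actual


def share_realised(potential: int, actual: int) -> float:
    """Fraction of the theoretical potential already in use (illustrative)."""
    return actual / potential


gap = untouched_gap(could_do, doing_now)
realised = share_realised(could_do, doing_now)

print(f"Gap: {gap} percentage points")
print(f"Potential already realised: {realised:.0%}")
```

On these numbers the gap is 61 percentage points, and roughly a third of what AI could do in these roles is already being done.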

One data point in the paper deserves more attention than it gets. Since 2024, companies have been hiring fewer people aged 22 to 25 into the roles most affected by AI — down roughly 14% compared to 2022. No such shift for workers over 25. The people who would have spent the next decade learning on the job, building experience, and eventually stepping into senior roles are finding fewer doors open. This is not yet a crisis. It is the kind of early signal that seems obvious only after the fact.

So — Is AI Coming for Your Job?

If your work is physical, place-based, or involves making the actual coffee rather than scheduling the meeting about the coffee strategy — you are probably safer than the headlines suggest.

If your work is about thinking, advising, deciding, or explaining — AI is already doing parts of it somewhere. The question is not whether that will reach you. It is whether your organisation has thought about what to do when it does.

The organisations that navigate this well will not be the ones that moved fastest or spent the most. They will be the ones that asked the less comfortable question first — and asked it about themselves, not about someone else.

Three Things Worth Taking Away

1. The risk is closer to the top than the bottom. The most AI-affected workers are your most educated, highest-paid knowledge workers. If your organisation’s AI risk conversation is focused entirely on operational and frontline roles, it is focused in the wrong direction.

2. The gap between what AI can do and what it does today is closing. Slow adoption is not the same as no adoption. The functions most resistant to change — Legal, Finance, Management — are also among the most ready for AI to move into. Caution buys time. It does not buy safety.

3. The next generation of leaders is getting fewer chances to learn. Young workers are already finding fewer entry points into knowledge-intensive careers. This will not show up as a leadership problem today. It will show up in ten years. The organisations paying attention now will be the ones with something to show for it.